Modular WebGL with PEX

This is a transcription of the presentation I gave at the WebGL Workshop Meetup in London in March '16, organized by Carl Bateman.

You can click on any of the slides to see a bigger version.

Hi, today I would like to talk about PEX - a WebGL library we are developing at Variable. We will see how it compares to three.js and other libraries and what the benefits of using the Node.js/npm ecosystem are.

PEX is not a single library but an ecosystem of modules working together. They will handle common tasks like creating window / gl context, loading 3d models etc. PEX can run on the Desktop or in any browser (like Chrome, Safari, Firefox, Edge etc) that supports WebGL.

Enter Plask - a multimedia programming environment developed by Dean McNamee.

Plask extends Node.js with:

- An implementation of the WebGL spec on top of OpenGL for 3D

- Skia Graphics Library (from Android) for 2D

- PDF output for vector graphics

- Syphon for A/V software compatibility

- Audio / Video playback, MIDI support

- All that is wrapped in a native OSX Window that allows for fullscreen, multi screen setup etc.

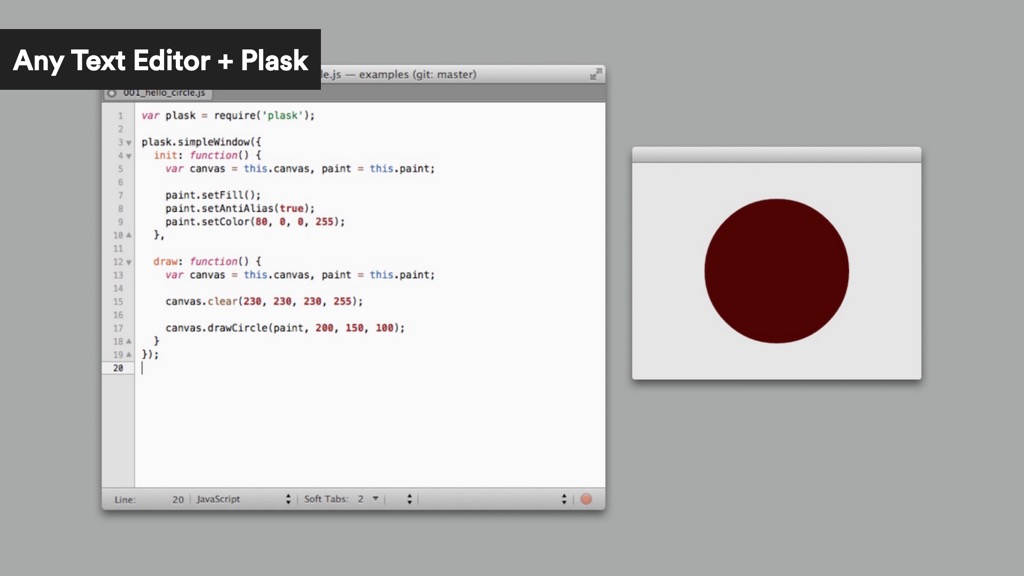

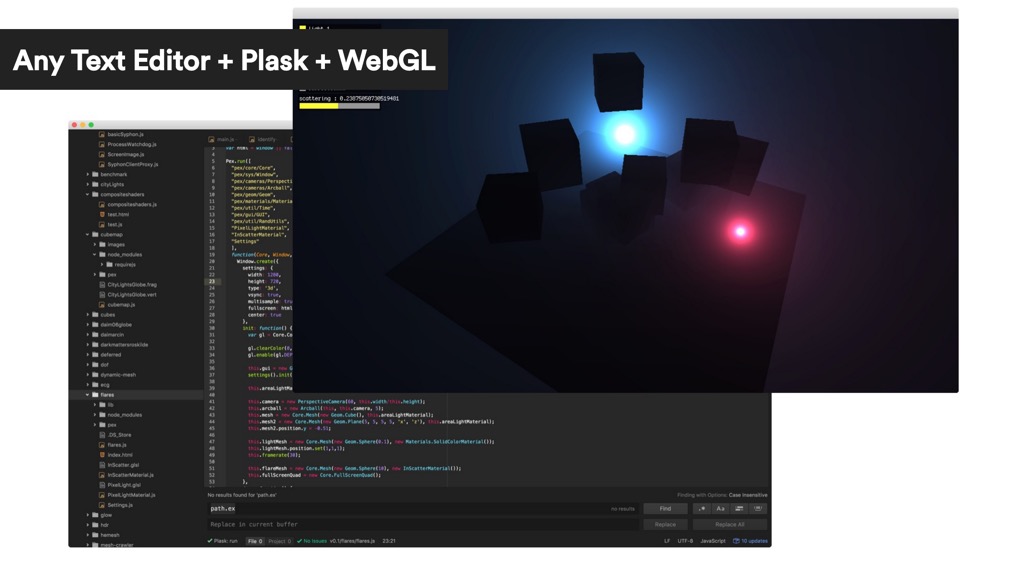

The way it all works is that you write your JS in your favourite text editor and then use Plask.app to run it for you. Starting from simple 2D shapes in Skia...

I started using Plask from the early days at CIID when Dean was still experimenting with the API. Plask comes with basic utils for 3d math and shader compilation derived from Dean's work on Pre3d.js and then PreGL (that we developed while working on NinePointFive, even before WebGL was in a stable version of Chrome) but not much more.

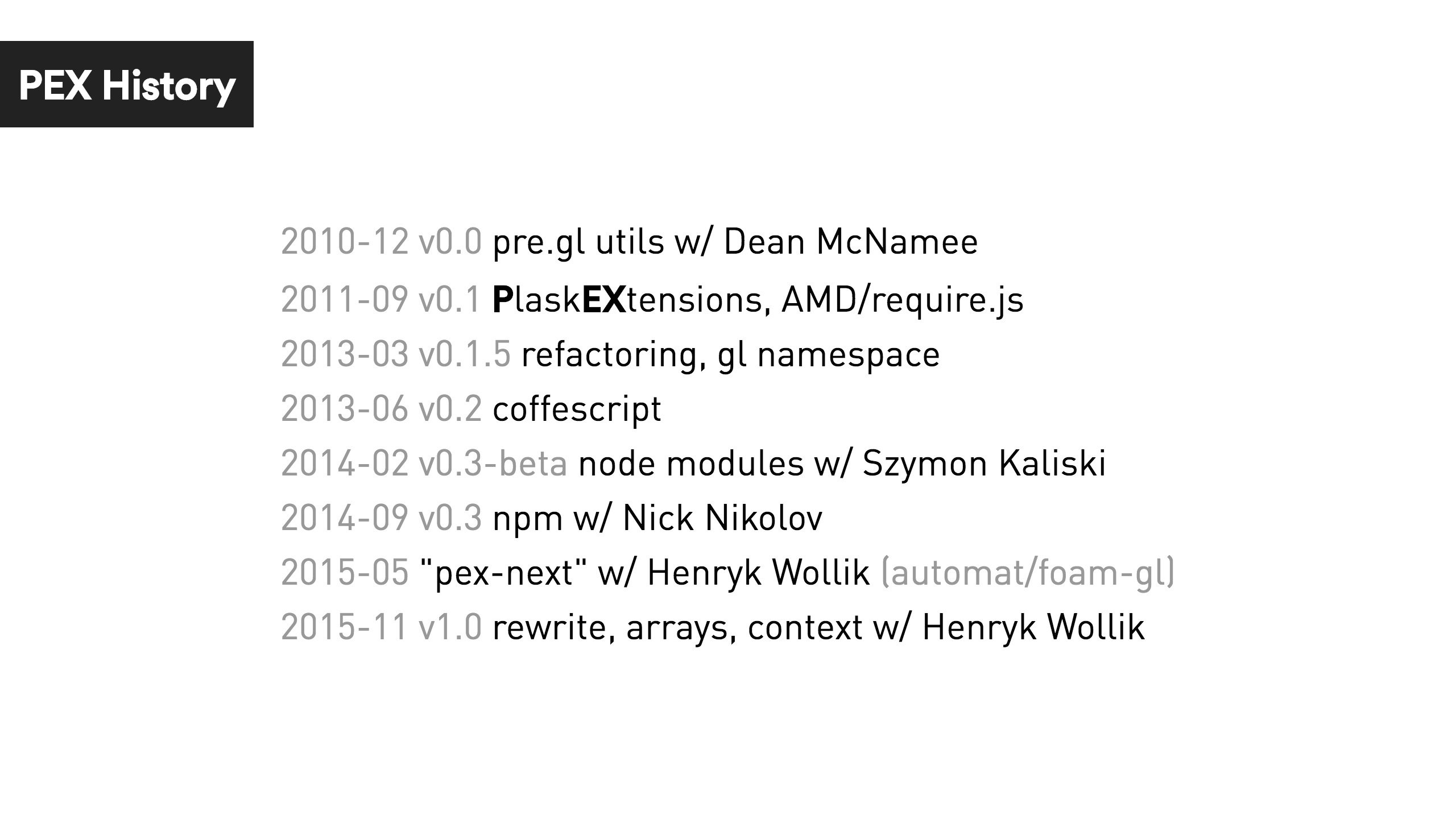

Quite quickly I realized that I wanted to develop my projects both for Plask and for browsers, so PEX stands for PlaskEXtensions and aims to hide the differences between the environments.

So far there have been about 4 versions of PEX: from a single JS file to async modules with require.js, through a detour into CoffeeScript, a rewrite back to JS and publishing on npm, to the current version that aims for even greater modularity and interoperability with other libraries.

All these revisions wouldn't have happened without: Dean McNamee, Szymon Kaliski, David Górny, Nick Nikolov and Henryk Wollik.

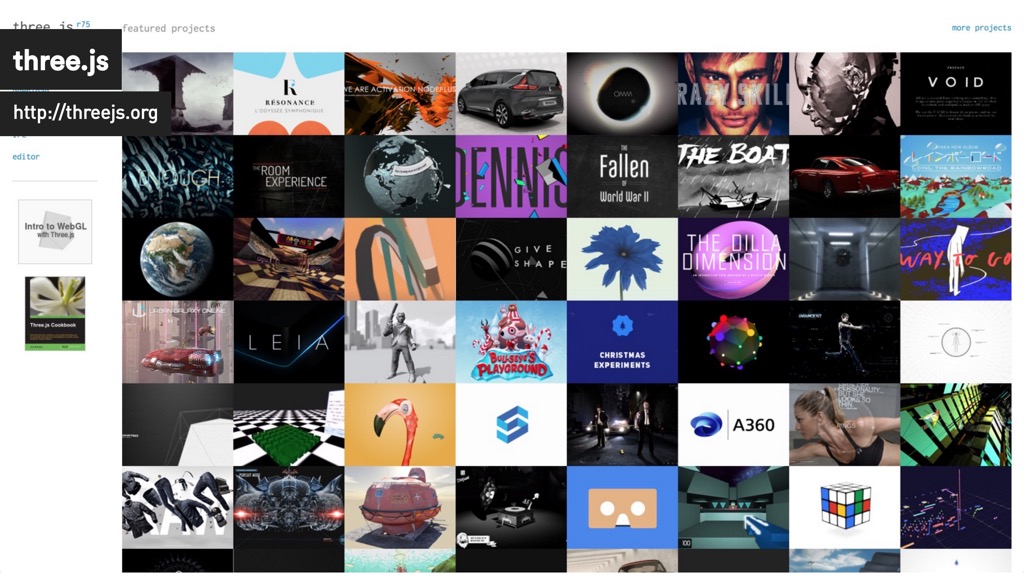

If you start comparing PEX with other WebGL libraries out there like three.js, PlayCanvas or babylonjs, PEX resembles a creative coding toolkit like Processing, openFrameworks or Cinder more than a game engine. There are a few smaller WebGL util libraries, but the one with the biggest community, stack.gl, shares a similar vision.

PEX started around the same time as ThreeJS but the two libraries are in very different places now. Community is the strongest aspect of ThreeJS, to the point where for many people ThreeJS == WebGL. While familiarity, ease of use, learning and finding support, and browser compatibility are really important (and probably most important in production), the fact that ThreeJS is one big monolithic library has its downsides: complex interdependencies, constant changes with new versions, not easy to grasp implementation of core features etc. While three.js is often recommended to WebGL beginners, in my opinion it hasn't been the best way to understand how WebGL works under the hood.

It's changing though. The newest version has a more modular rendering core and materials, and things are going in the right direction.

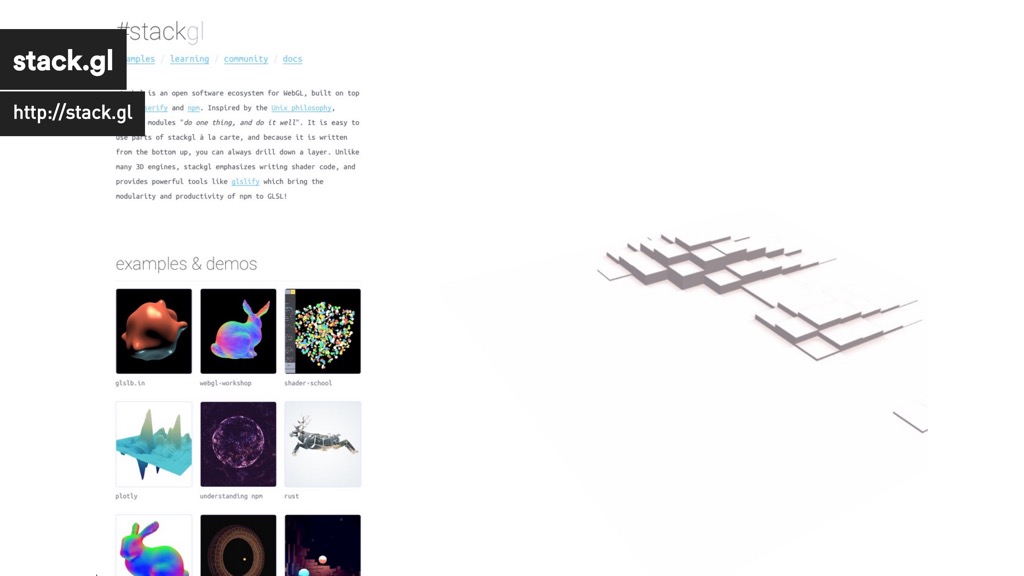

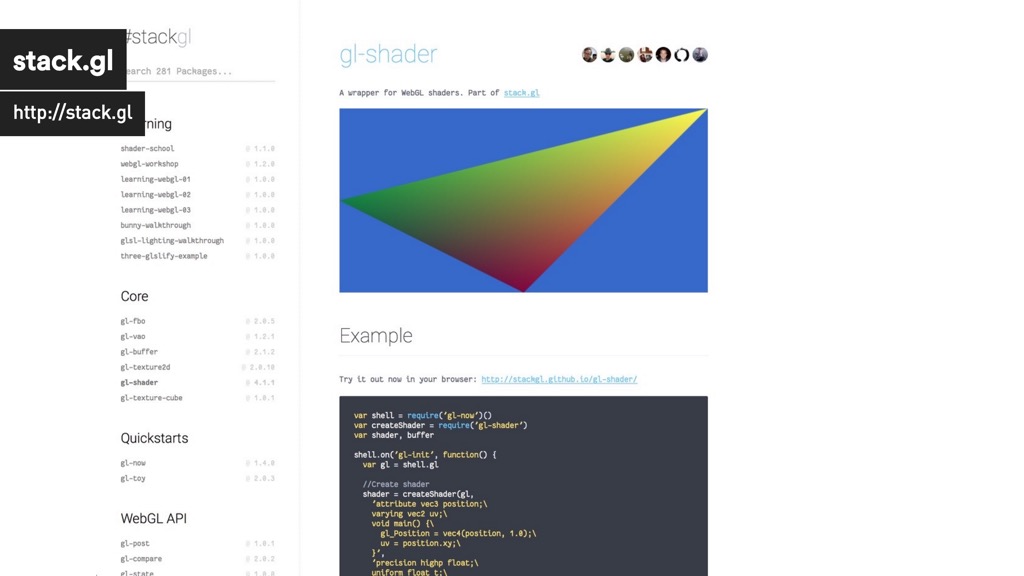

stack.gl is the complete opposite. Following the Unix philosophy, stack.gl is composed of micromodules that "do one thing, and do it well". That means you don't have a mesh-geom-utils-kitchen-sink library; instead geometric primitives, model loading, computing normals or subdivision are all separate modules combined to match each project's needs.

In fact, at the time of writing stack.gl has 281 modules to choose from.

UPDATE: 295 now as of 2016/04/11

I like to place PEX somewhere in the middle, as a collection of minimodules that, while slightly coupled together, provide a common interface and convenience and avoid boilerplate (system and rendering core). Below that we have a solid base of micromodules building on and contributing to the npm ecosystem (file format utils, shaders, geometry algorithms).

You can find some of the projects that PEX was used for at http://pex.gl.

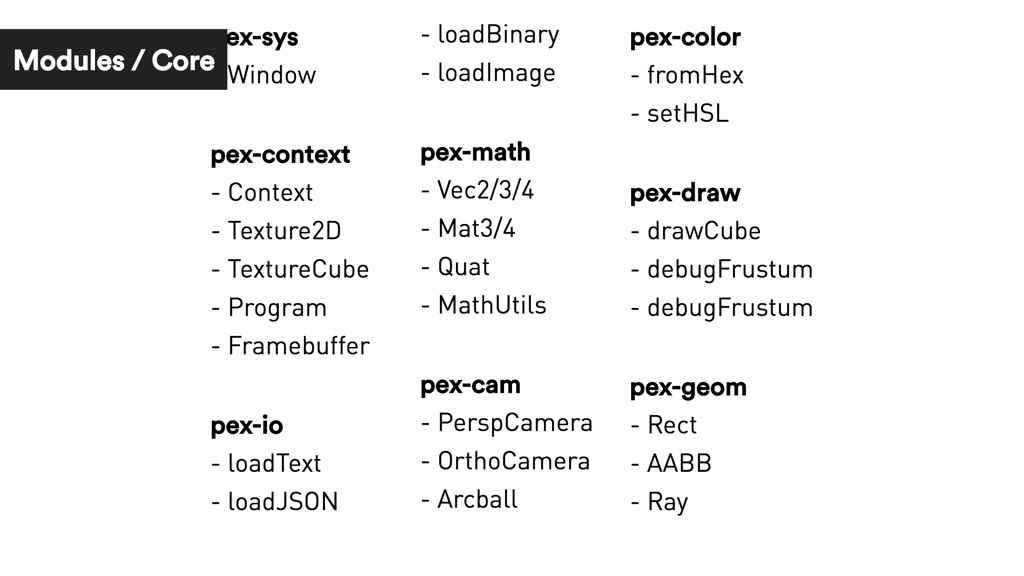

What are the core PEX modules then?

- pex-sys - for creating an OSX Window / Browser HTMLCanvas, getting the GL context and handling mouse/keyboard events

- pex-context - gl context wrapper for creating textures and shaders, managing state, extension loading etc

- pex-math - array based vector and matrix lib very similar to glMatrix but with a saner API

- pex-color - color manipulation utils

- pex-io - util library for loading files in node.js and the browser

- pex-cam - basic 3d cameras and controllers

- pex-draw - debug draw utils for bounding boxes, vectors and frustums

- pex-geom - geometry utils
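To illustrate what "array based" means for a math library, here is a minimal sketch in which a vector is a plain JavaScript array and every operation is a standalone function. The function names (`vec3Add`, `vec3Scale`, `vec3Length`) are made up for this example and are not the pex-math API.

```javascript
// Hypothetical helpers for illustration only, not the pex-math API.
// A vector is just a plain array: [x, y, z].

// Adds b to a in place and returns a, so calls can be nested.
function vec3Add (a, b) {
  a[0] += b[0]
  a[1] += b[1]
  a[2] += b[2]
  return a
}

// Multiplies a by the scalar s in place and returns a.
function vec3Scale (a, s) {
  a[0] *= s
  a[1] *= s
  a[2] *= s
  return a
}

// Returns the Euclidean length of a.
function vec3Length (a) {
  return Math.sqrt(a[0] * a[0] + a[1] * a[1] + a[2] * a[2])
}

var position = [1, 2, 2]
var velocity = [0, 1, 0]
var startDistance = vec3Length(position)

// Move the position two steps along the velocity.
// Note that vec3Scale also modifies velocity itself.
vec3Add(position, vec3Scale(velocity, 2))
```

Because vectors are plain arrays, they can be passed directly to anything else that expects arrays of numbers, without wrapping or unwrapping.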

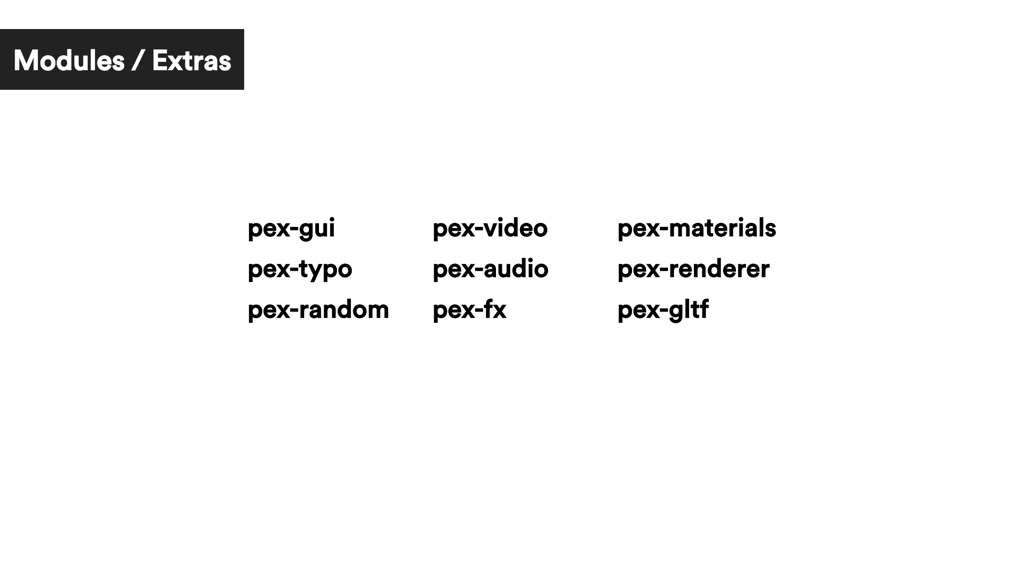

Extra modules for everyday convenience: a GUI control toolkit, audio / video playback, random numbers, postprocessing, loading scene files and a library of frequently used shaders.

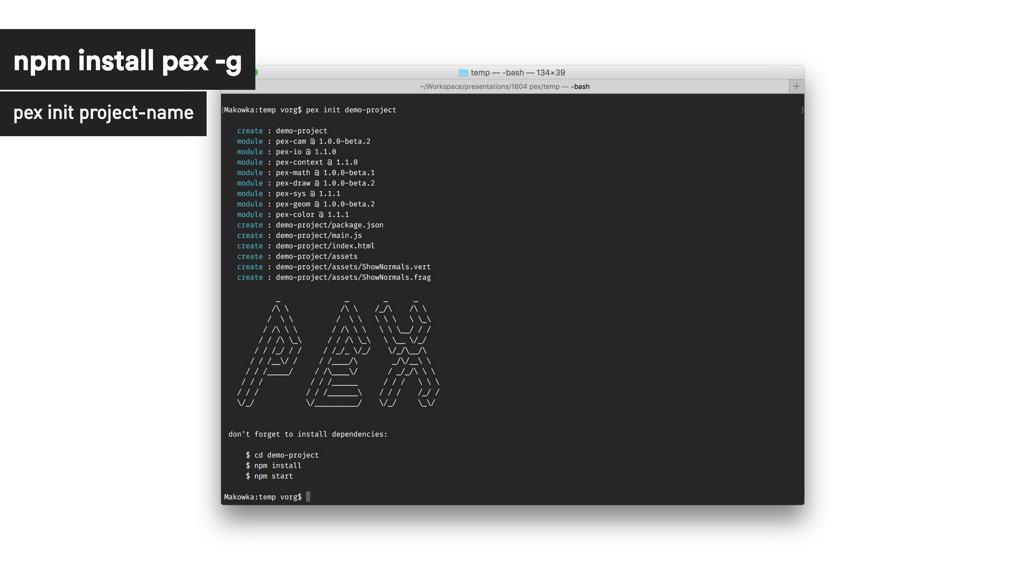

- Install NodeJS

- Install the pex command line tool

npm install -g pex

- Create a new project

pex init project-name

- Install dependencies and run

cd project-name

npm install

npm start

Inside your project folder you will have a main.js file with the app code, an assets folder with a basic shader and node_modules with all your dependencies. The npm start command will compile your code via browserify and open a browser for you.

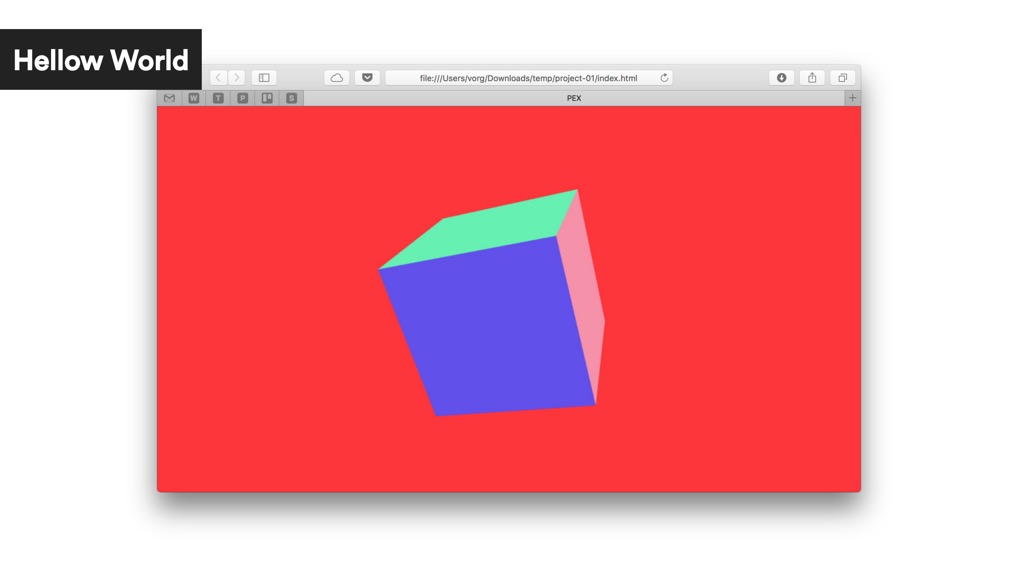

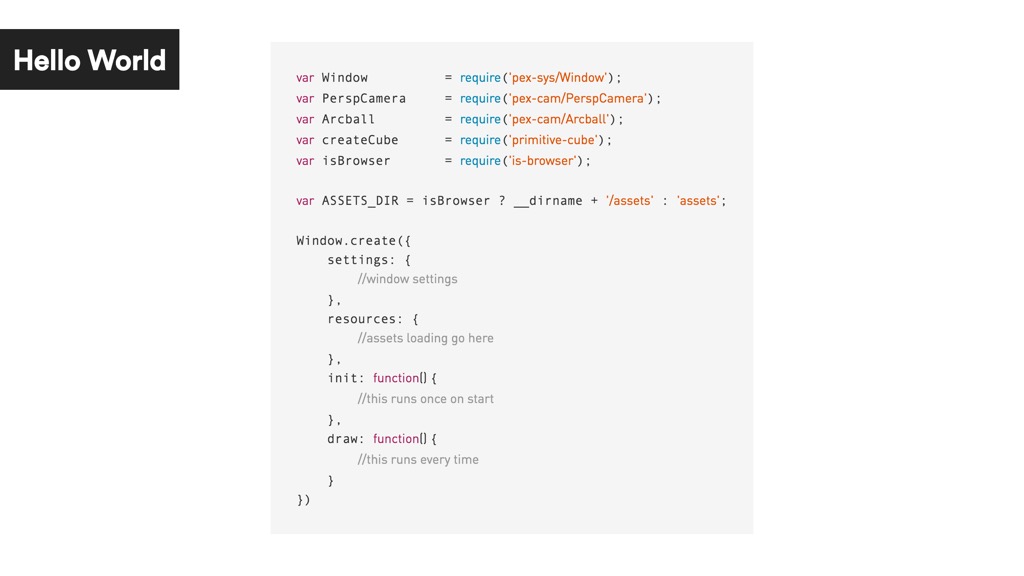

The overall code structure might look familiar to you if you are coming from Processing, openFrameworks or Cinder. We create a window and implement an init() function that runs once on load and a draw() function that runs every frame.

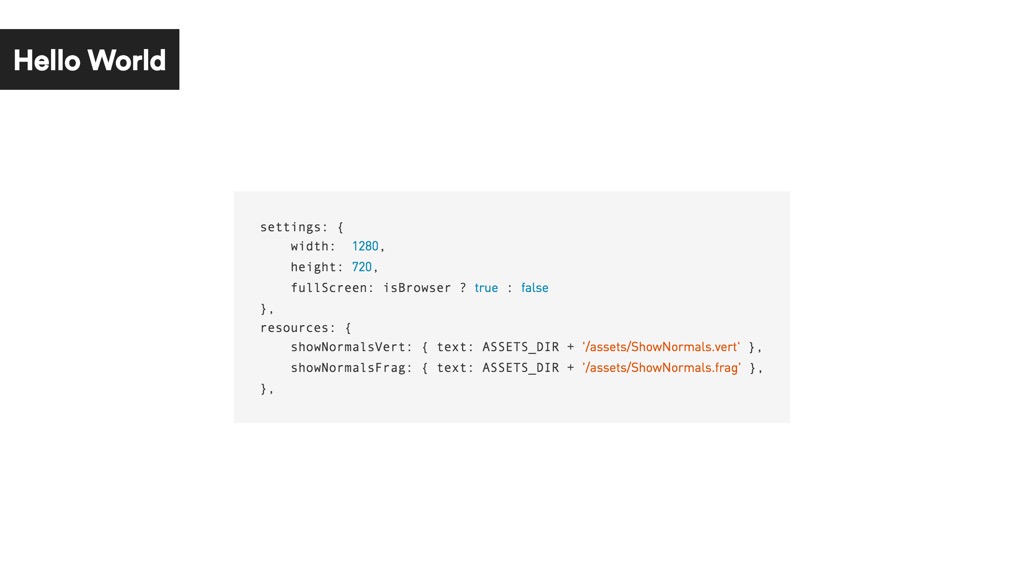

The settings property allows us to specify window size, fullscreen mode and pixel ratio for retina screens.

Resources are files to be preloaded before the init() function is called. You can use them, for example, to load your shaders and textures.
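The lifecycle described above (settings, resources loaded first, init() once, draw() every frame) can be sketched without any library. The `createWindow` function below is a hypothetical stand-in written for this example, not the actual PEX API; the setting names, the asset paths and the fake loader are assumptions too. Real loading would be asynchronous and real frames would be scheduled by the window; both are simplified here so the sketch runs anywhere.

```javascript
// Hypothetical stand-in for a window module; not the actual PEX API.
// It loads resources, calls init() once, then calls draw() once per frame.
function createWindow (app, loadResource, frameCount) {
  var win = Object.assign({ frame: 0, loaded: {} }, app)

  // Load every declared resource before init() runs.
  Object.keys(app.resources || {}).forEach(function (name) {
    win.loaded[name] = loadResource(app.resources[name])
  })

  win.init()

  // A real window would keep scheduling frames; here we run a fixed number.
  for (var i = 0; i < frameCount; i++) {
    win.draw()
    win.frame++
  }
  return win
}

var log = []

createWindow({
  // Illustrative setting names, assumed for this sketch.
  settings: { width: 1280, height: 720, fullScreen: false, pixelRatio: 2 },
  // Hypothetical asset paths.
  resources: { vert: 'assets/Basic.vert', frag: 'assets/Basic.frag' },
  init: function () {
    log.push('init with ' + Object.keys(this.loaded).join(','))
  },
  draw: function () {
    log.push('draw ' + this.frame)
  }
}, function fakeLoader (path) {
  return 'contents of ' + path
}, 2)
```

The point of the structure is ordering: by the time init() runs, every resource is already available, so the init code never has to deal with loading callbacks.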

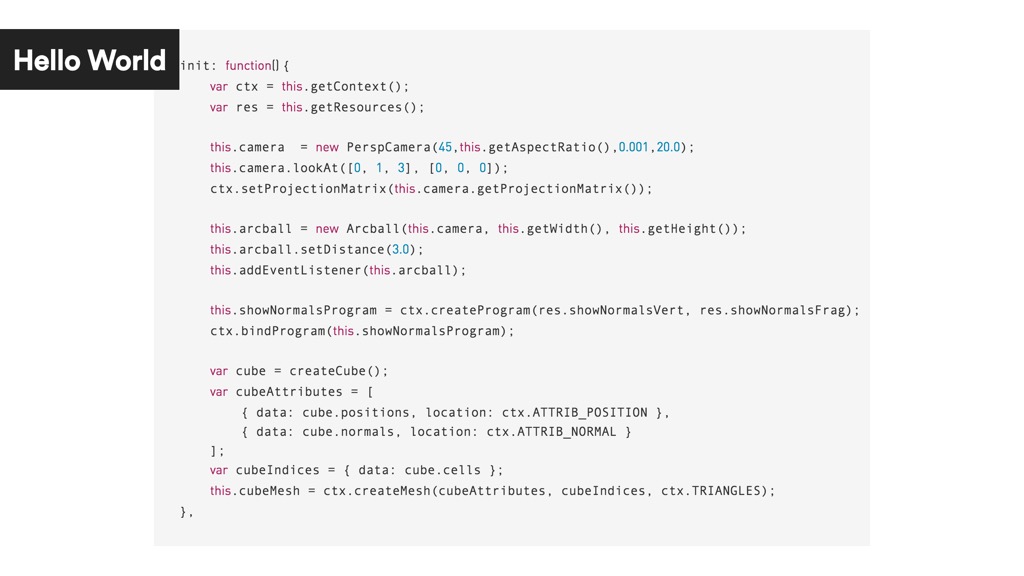

In the init function:

- we retrieve our gl context wrapper and resources

- create a 3d camera

- create an arcball controller for that camera

- create a shader program

- create a cube and build a mesh from its geometry

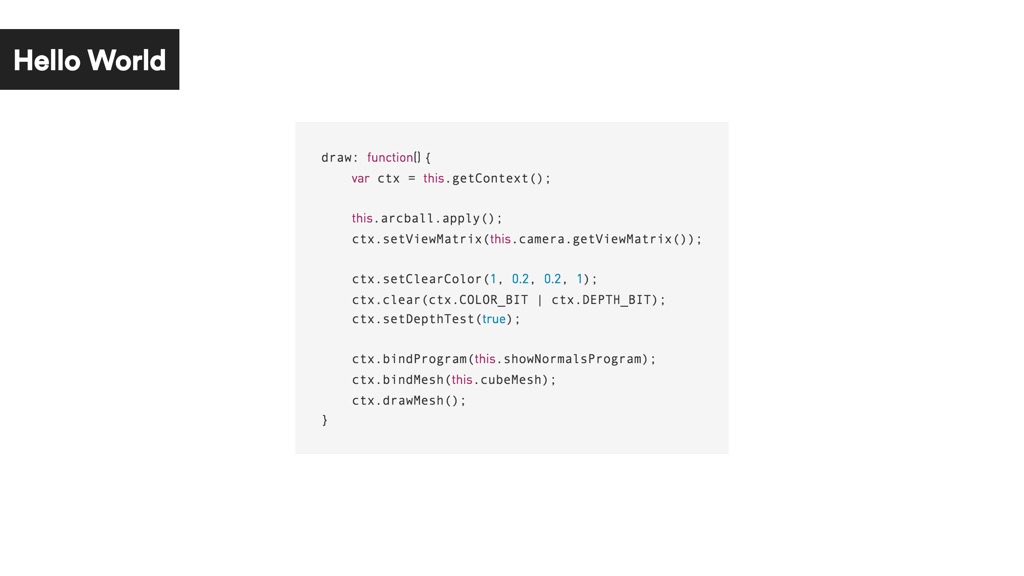

In the draw:

- we update arcball and our camera position based on the mouse movements from the last frame

- clear the screen with red color and enable depth testing

- set the current shader

- set the current mesh

- draw the mesh
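For comparison, the same draw steps can be sketched with plain WebGL calls instead of a context wrapper. This is not the code from the slides: `drawFrame` and its parameters are names chosen for this example, the program and index buffer are assumed to have been created in init(), and the camera update and vertex attribute setup are left out to keep it short.

```javascript
// Sketch of the draw() steps using plain WebGL calls.
// gl is a WebGLRenderingContext; program, indexBuffer and indexCount
// are assumed to have been created during init().
function drawFrame (gl, program, indexBuffer, indexCount) {
  // Clear the screen with red and enable depth testing.
  gl.clearColor(1, 0, 0, 1)
  gl.clear(gl.COLOR_BUFFER_BIT | gl.DEPTH_BUFFER_BIT)
  gl.enable(gl.DEPTH_TEST)

  // Set the current shader.
  gl.useProgram(program)

  // Set the current mesh (vertex attribute setup omitted for brevity).
  gl.bindBuffer(gl.ELEMENT_ARRAY_BUFFER, indexBuffer)

  // Draw the mesh as indexed triangles.
  gl.drawElements(gl.TRIANGLES, indexCount, gl.UNSIGNED_SHORT, 0)
}
```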

Wow. That's a lot of code to draw a cube! Why bother with NodeJS?

- you get access to the whole npm ecosystem with thousands of modules

- you are not limited by the browser security sandbox (so it's easier to consume APIs, use sockets, serial ports, MIDI, OSC)

- you have access to the file system (sync/async IO, streaming, caching, saving screenshots)

- all that is perfect for data processing (big CSV files, binary, databases)
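As a small illustration of the file system and data processing points, the sketch below writes a tiny CSV file, reads it back with Node's `fs` module and sums one column. The file name and the data are made up for this example, and the parser is deliberately naive (no quoted fields); a real project would more likely pick a CSV module from npm.

```javascript
var fs = require('fs')
var os = require('os')
var path = require('path')

// Parses simple comma-separated text (no quoted fields) into row objects.
function parseCsv (text) {
  var lines = text.trim().split('\n')
  var header = lines[0].split(',')
  return lines.slice(1).map(function (line) {
    var cells = line.split(',')
    var row = {}
    header.forEach(function (key, i) { row[key] = cells[i] })
    return row
  })
}

// Write a small made-up data file so the example is self-contained.
var file = path.join(os.tmpdir(), 'pex-example-data.csv')
fs.writeFileSync(file, 'name,tweets\nmuseum-a,12\nmuseum-b,30\n')

// Read it back synchronously and aggregate one column.
var rows = parseCsv(fs.readFileSync(file, 'utf8'))
var total = rows.reduce(function (sum, row) {
  return sum + Number(row.tweets)
}, 0)

console.log(rows.length + ' rows, ' + total + ' tweets') // prints "2 rows, 42 tweets"
```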

Let me show you some examples:

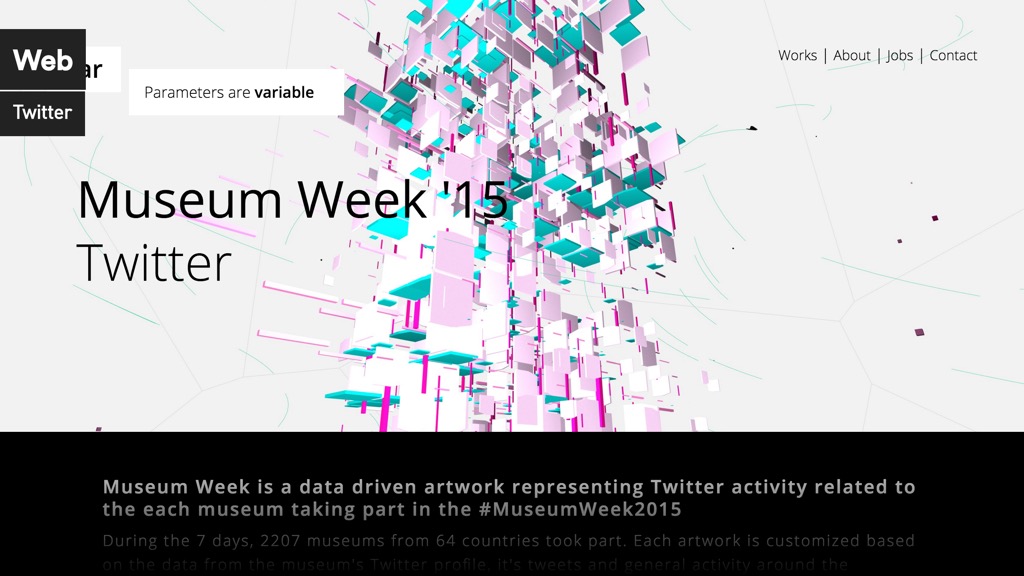

For Museum Week we built a generative artwork that was unique for each of the 2000 participating museums and connected to their Twitter activity.

OK, that was a normal web project.

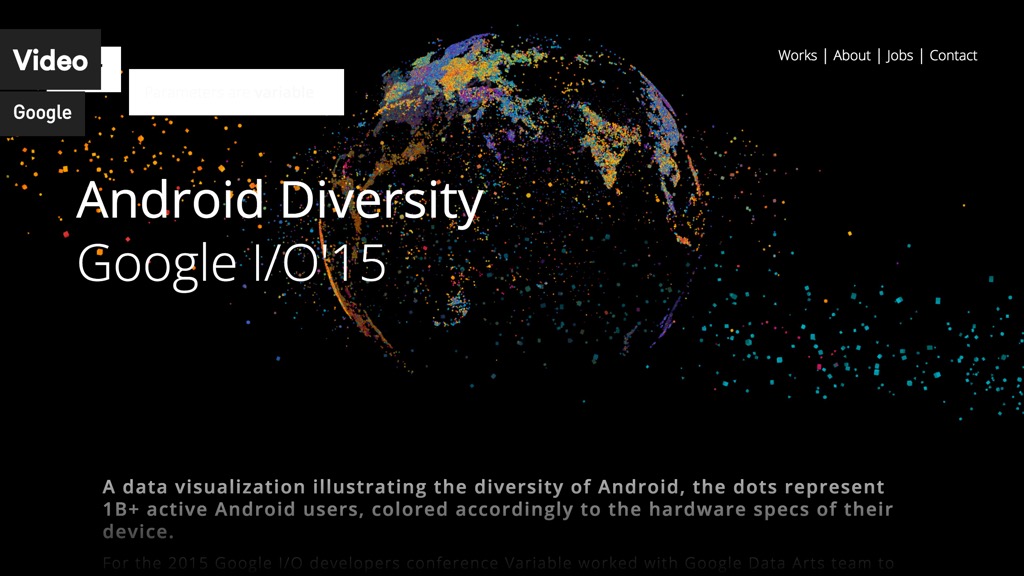

In Android Diversity we used browser mode for prototyping and a desktop app to render a video sequence at a resolution of 19278px × 900px that was displayed using 46 projectors during Google I/O'15.

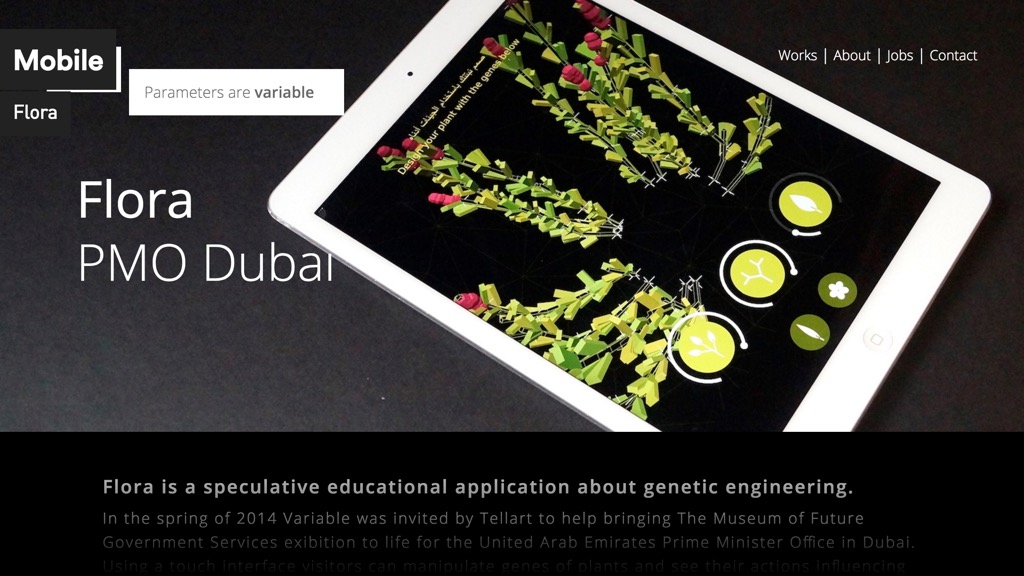

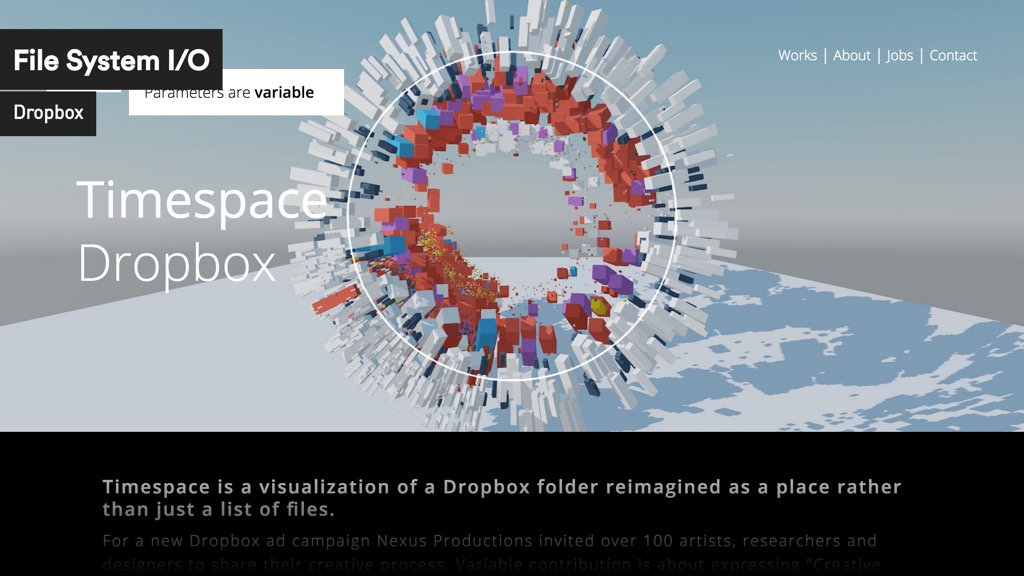

In Timespace we use file system access to scan your Dropbox folder and visualize its file structure.

In UCC Organism we've built tools for map editing and server data visualization, and run 20 living organisms across 20 ODroid devices running Android and Mac Minis.

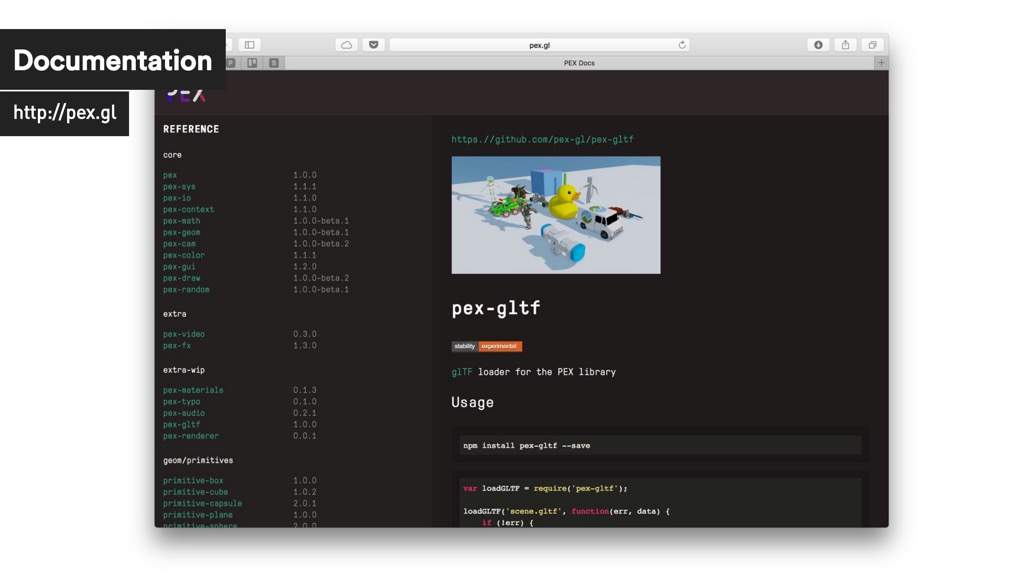

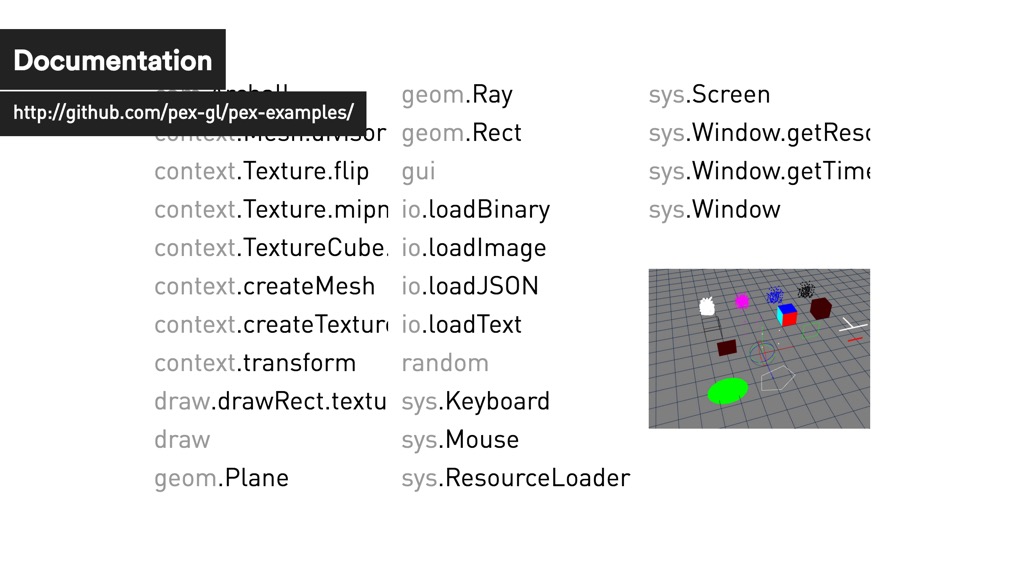

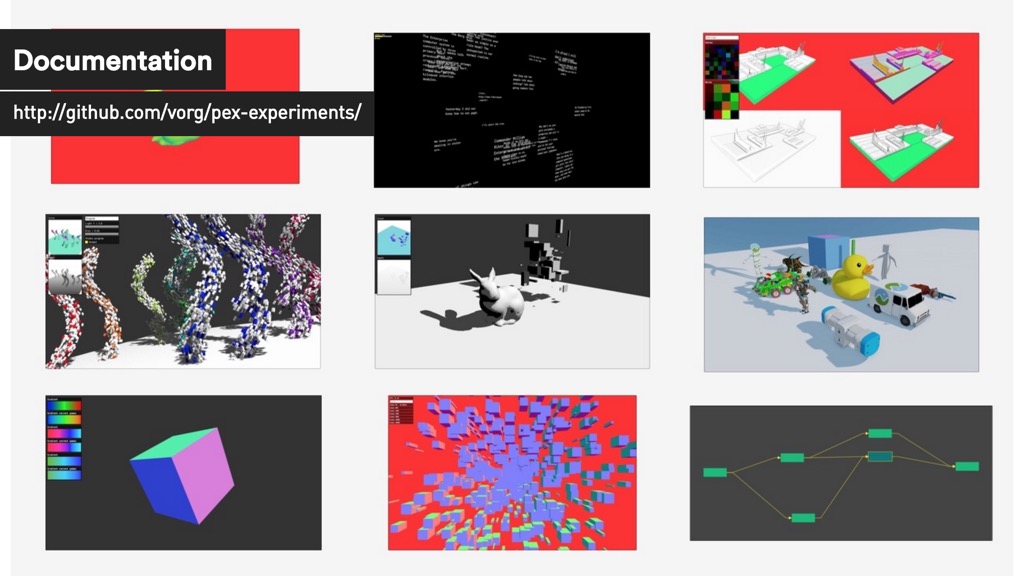

The documentation is still a work in progress but you can check out the current version at http://pex.gl/docs/

A much better place to start is the pex-examples repository that has simple examples and visual tests that we use to verify that PEX is working correctly.

For more advanced examples see pex-experiments.

UPDATE: The glTF screenshot comes from pex-gltf/examples/all

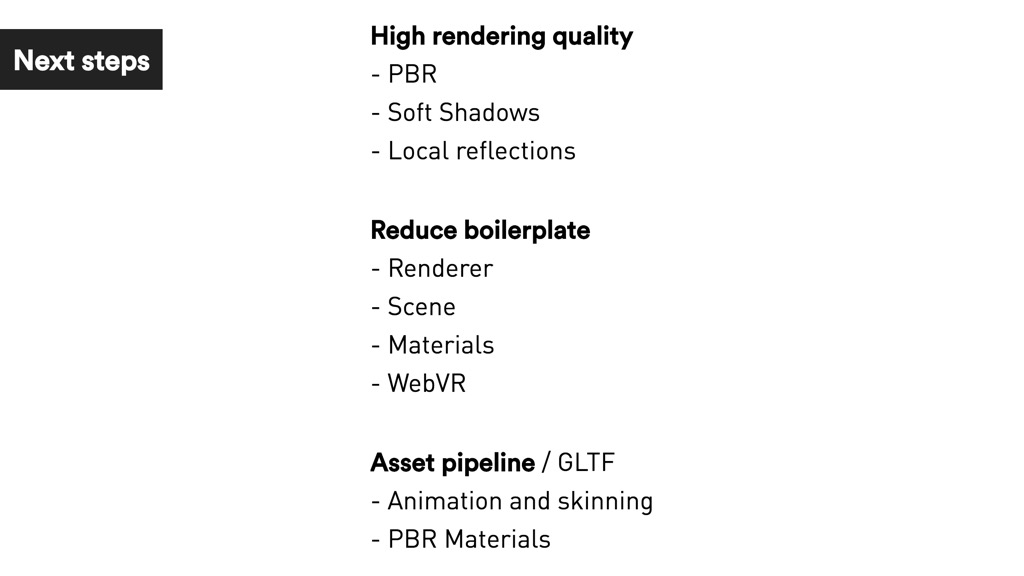

So what's next for PEX?

- high quality rendering in the core

- reducing boilerplate for the common tasks

- continue building asset pipeline on top of glTF

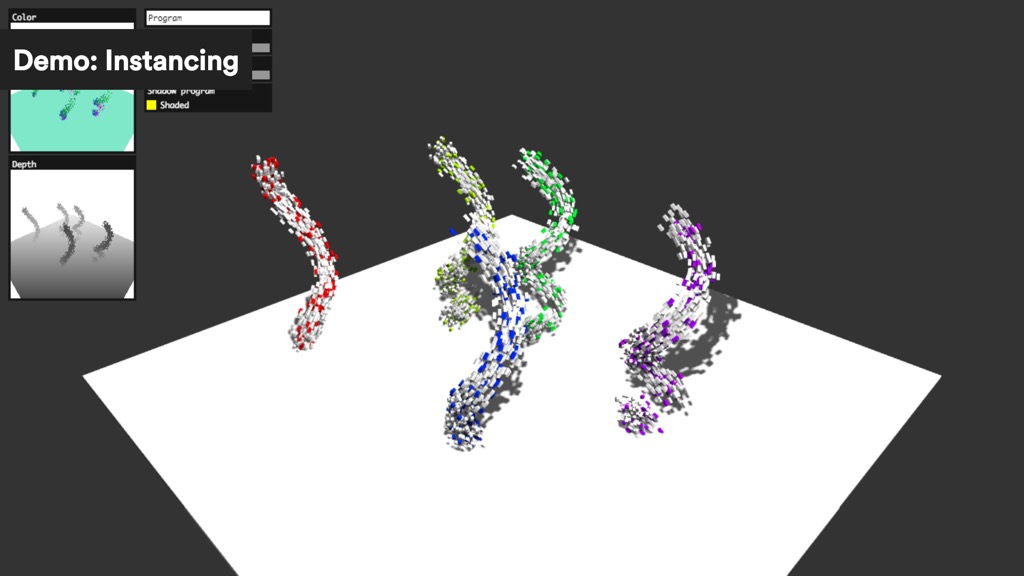

Shadowmaps and Instancing live demo.

Source at GitHub pex-experiments/instanced-stream.

glTF loader demo of all sample meshes from GitHub KhronosGroup/glTF. No live demo because of the size of the meshes, but the source code is on GitHub pex-gl/pex-gltf/examples/all/

Parallax correct / local cubemap reflections live demo.

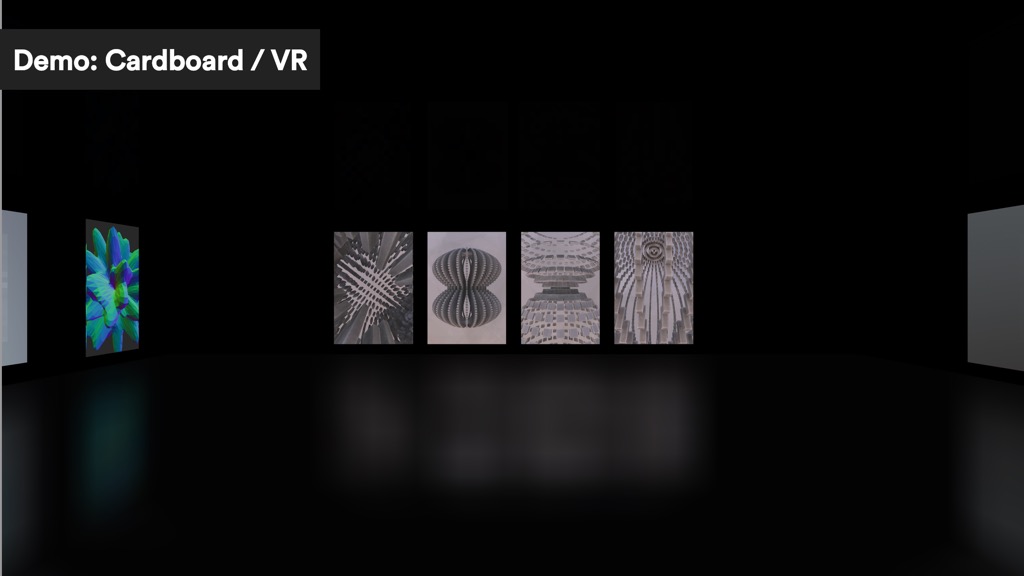

Test Cardboard v1 setup. Need to move to WebVR.

Source at GitHub pex-experiments/local-cubemap-reflections.

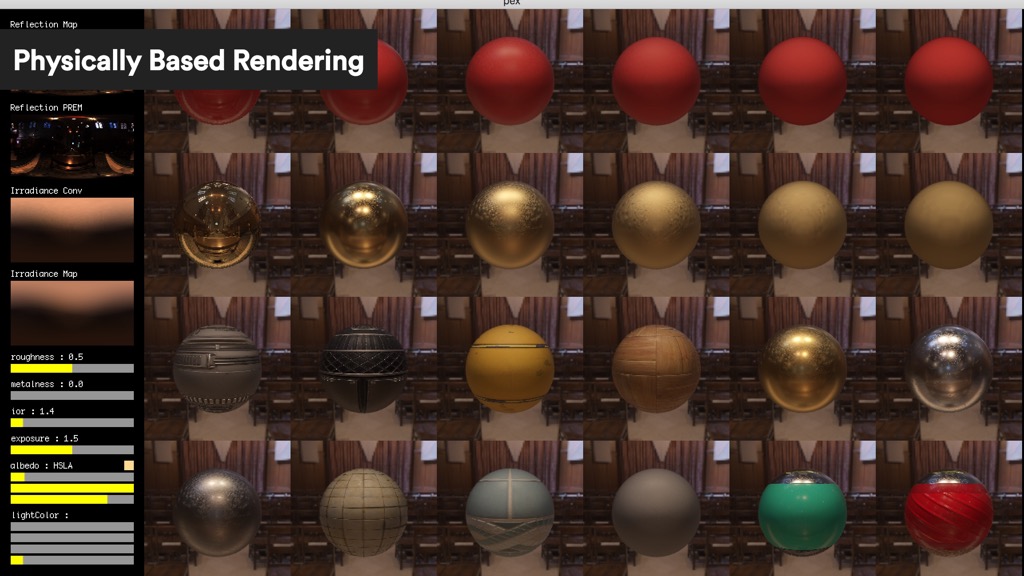

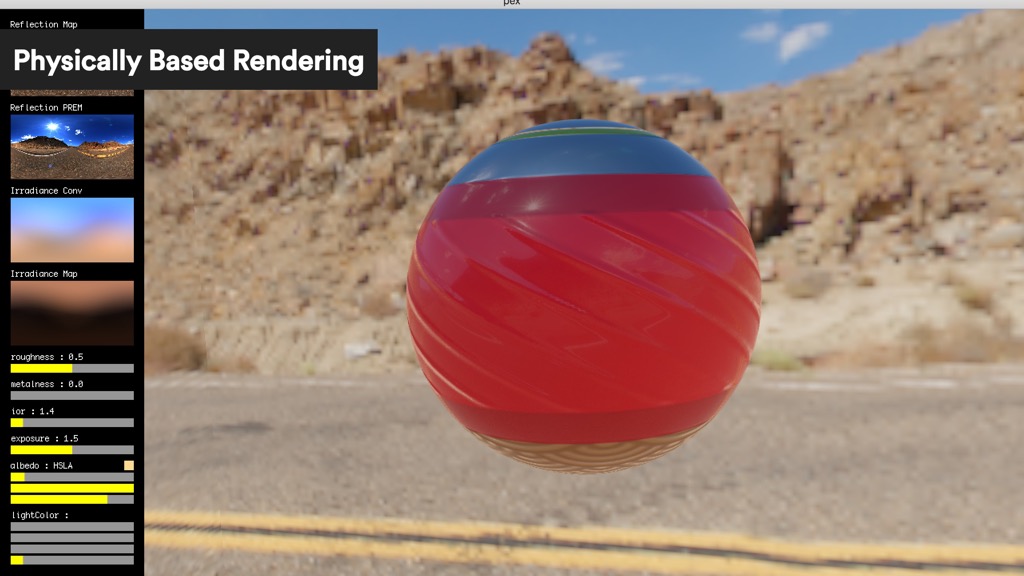

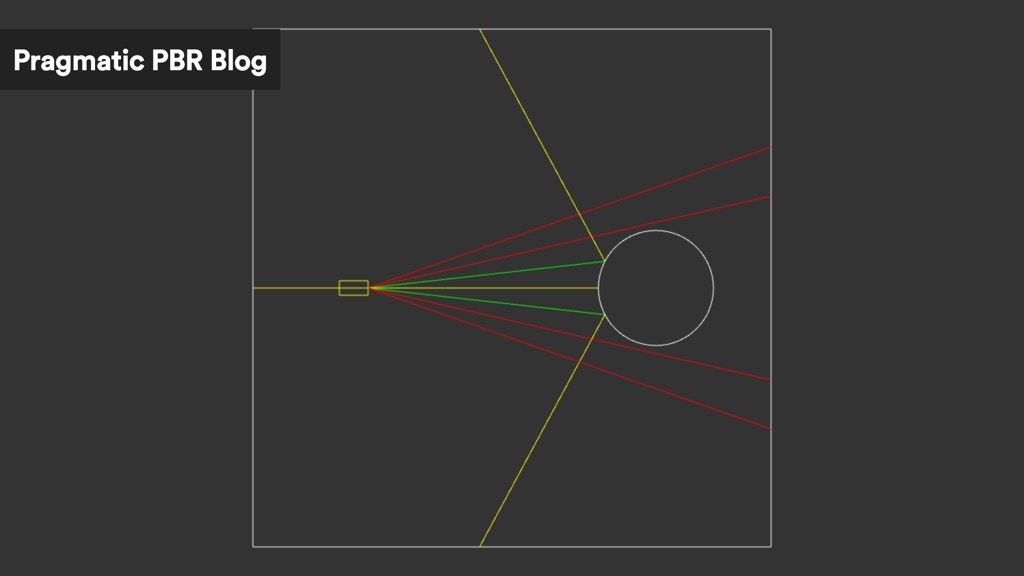

Physically based rendering allows for predictable material behaviour under different lighting conditions. The current implementation is based on Unreal Engine 4 with on the fly environment map convolution and prefiltering. I'm blogging about the process of implementing it in WebGL in the Pragmatic PBR blog series.

Example material with:

- metallic map (dielectric/plastic vs metal)

- roughness map defining reflection blurriness

- normal map for bump mapping, surface features

- constant reflectivity / Fresnel effect
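The Fresnel effect in the last item is often modelled in real-time PBR with Schlick's approximation, and the metallic map is commonly used to choose the base reflectivity. The sketch below shows both ideas in plain JavaScript rather than shader code. Whether the PEX implementation uses exactly these formulas is an assumption; the function names and the 0.04 dielectric constant are conventions chosen for this example.

```javascript
// Schlick's approximation of Fresnel reflectance.
// cosTheta: cosine of the angle between the view direction and the normal
// f0: reflectivity when looking straight at the surface (0..1)
function fresnelSchlick (cosTheta, f0) {
  return f0 + (1 - f0) * Math.pow(1 - cosTheta, 5)
}

// Base reflectivity in a metallic workflow (an assumed convention):
// dielectrics get a small constant, metals take it from the base color.
function baseReflectivity (baseColor, metallic) {
  return baseColor.map(function (channel) {
    return 0.04 * (1 - metallic) + channel * metallic
  })
}

// Plastic-like surface: weak reflection head-on, strong at grazing angles.
var headOn = fresnelSchlick(1, 0.04)
var grazing = fresnelSchlick(0, 0.04)

// Fully metallic surface: reflectivity is tinted by the base color.
var metalF0 = baseReflectivity([1, 0.8, 0.2], 1)
```

Head-on, the surface reflects only its base reflectivity, while at grazing angles the reflectance approaches 1 regardless of the material, which is why even rough plastic shows bright reflections near its silhouette.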

PBR Face live demo.

This demo uses the free model of Lee Perry-Smith's head, now available for download from the Computer Graphics Data lab under a Creative Commons license.

For the PBR implementation I took some time to understand the basics, as I was frustrated at not being able to grasp the whole story from A-Z. There are so many engine specific details and assumptions that most of the tutorials don't cover.

On this slide you can see my research mood board done in Sketch with all the presentations, blog posts and material I could find in order to understand the differences and connections between implementations and PBR models.

Here is an example of how reflections work, with a live demo and full description in the Pragmatic PBR : HDR post.

I always wanted PEX to be also about sharing knowledge and what I learned in the process.

PEX grows with every project and every experiment. I hope some people will be able to learn from it and grow too, just as this PEX logo inspired by Karsten Schmidt/Toxi and his Type & Form breakthrough 3D print from 2008.

Thank you!

For future updates:

http://pex.gl

http://github.com/pex-gl

@marcinignac

@variable_io